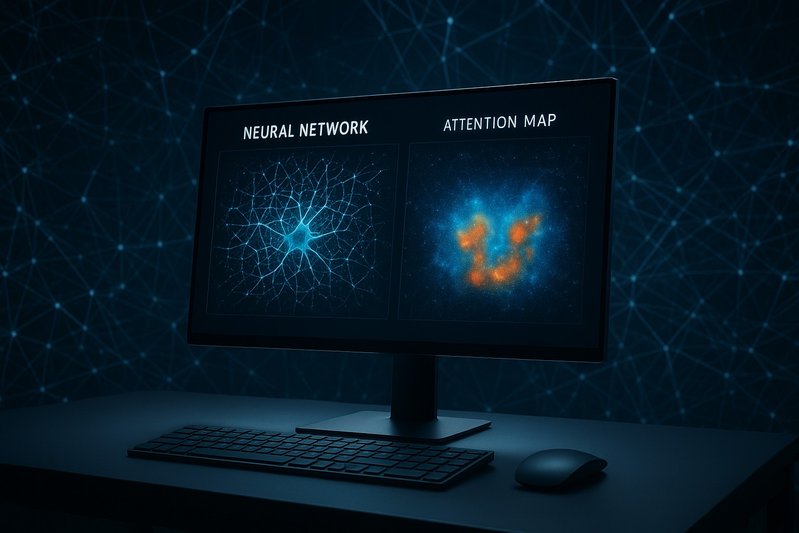

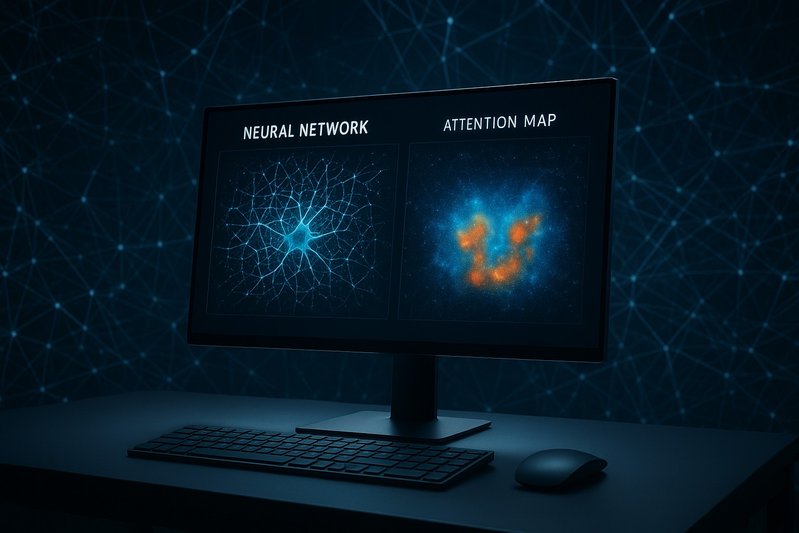

Causal Interventions and Sparse Features Outperform Attention Maps in Reasoning LLMs

Explore how causal interventions and sparse features surpass traditional attention maps in LLMs, affecting reasoning in AI systems.

7 articles

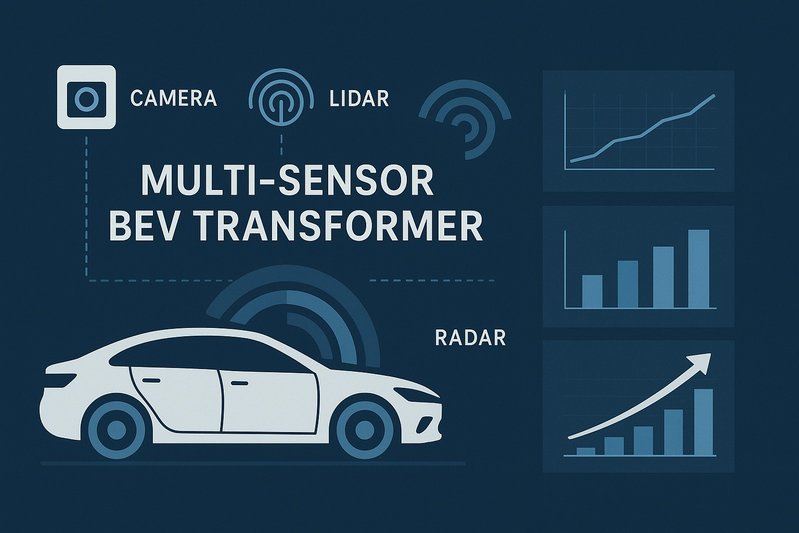

Explore how multi-sensor BEV transformers outperform task-specific detectors on nuScenes and Waymo datasets, enhancing perception in autonomous vehicles.

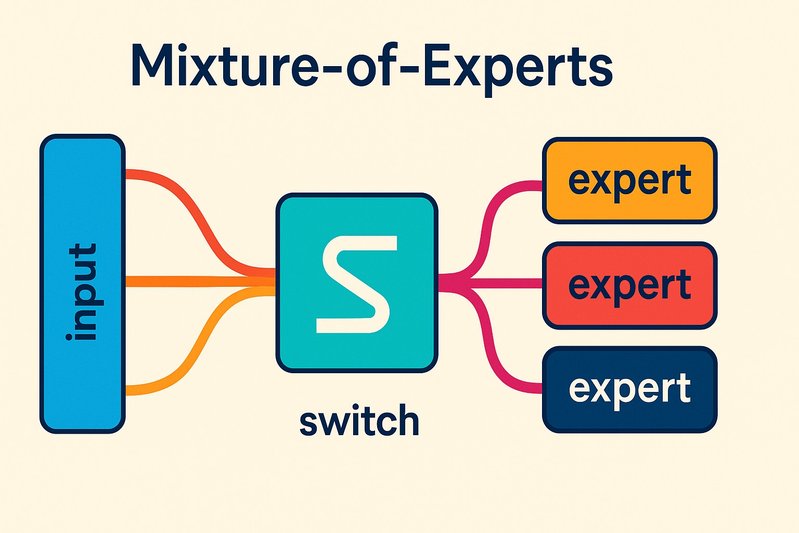

Explore how top-1 routing and expert pruning can drastically enhance MoE performance, reducing compute by 50% with optimal runtimes.

Discover the future of transformers in AI, exploring efficiency, scalability, and emerging research paths in this dynamic field.

Explore how low-precision numerics in transformer models are reshaping business sectors, and the economic impact of their adoption.

Dive into the advancements of FP8 and INT8 quantization in transformers, exploring their impact on performance and efficiency in machine learning.

Explore how time-series transformers and innovative models are shaping the future of stock market predictions in finance and technology.
