Tail-Accurate Observability for Gemini Pipelines: OpenTelemetry, Streaming SLIs, and Bias‑Resistant Benchmarking

The hardest problems in real-time AI aren’t in the models—they’re in the tails. In production Gemini pipelines, a few slow requests can sink user experience, destabilize streams, and burn error budgets. What matters is not an average, but the shape of the distribution: TTFT outliers, streaming stalls, queue lag rollups, and CPU/GPU plateaus that signal a throughput–latency knee. As teams move from demos to always-on pipelines—spanning streaming, multimodal inputs, tool calling, RAG, and long-context prompts—the signals must be precise, causal, and statistically defensible.

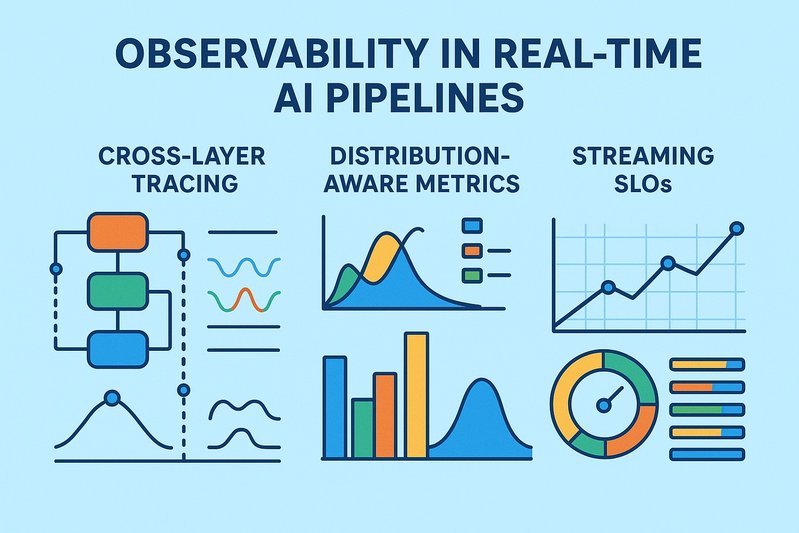

This article details a practical blueprint for tail-accurate observability and repeatable benchmarking of Gemini-based real-time pipelines. It lays out end-to-end SLIs (including TTFT/TTLT and cold vs warm delineation), a cross-layer trace model that stitches HTTP/gRPC with Pub/Sub and Kafka, a distribution-aware metrics schema for latency and tokens, and a methodology that prevents measurement bias with open-loop arrivals and coordinated-omission safeguards. It also covers throughput–latency knee detection with GPU/TPU/CPU/memory telemetry, an error taxonomy that treats safety-blocked responses explicitly, and interface-level performance considerations when comparing the Gemini API and Vertex AI under matched quotas and rate limits. Readers will get a working model for instrumentation, performance testing, and operational decision-making that holds up to statistical scrutiny.

Architecture/Implementation Details

End-to-end SLIs that preserve tail fidelity

Real-time LLM performance lives and dies by clear, unambiguous SLIs:

- Latency percentiles (p50/p95/p99), measured end-to-end from client send to the final response byte for non-streaming requests.

- For streaming, define time-to-first-token (TTFT) as the interval from client send to the first token received by the client, and time-to-last-token (TTLT) as the interval from client send to stream completion.

- Track cold vs warm latencies to avoid mixing first-invocation cold starts with steady-state traffic; cold starts get their own counters and, ideally, their own SLOs.

- Throughput and capacity SLIs include QPS, concurrent active streams, and tokens/sec during streams. Where the API returns usage metadata, align token accounting with Gemini’s token guidance and quota boundaries.

Reliability and availability reporting must segment error classes: transport errors and timeouts, 4xx vs 5xx, safety policy blocks, and rate-limit responses. Availability is the ratio of successful outcomes to eligible requests over the SLO window. Following common SRE practice, exclude client-side faults, and keep rate-limit and safety classes visible as distinct outcomes.
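
To make the taxonomy concrete, the sketch below maps each response to exactly one outcome class and computes availability with client faults excluded. It assumes an HTTP-style status code (`None` meaning no response arrived before the timeout) and a boolean `safety_blocked` flag that your client derives from the response payload. The `Outcome` names and the `classify`/`availability`/`breakdown` helpers are hypothetical, not part of any Gemini SDK.

```python
from collections import Counter
from enum import Enum
from typing import FrozenSet, Iterable, Optional


class Outcome(Enum):
    """Outcome classes from the taxonomy above (names are illustrative)."""
    SUCCESS = "success"
    CLIENT_ERROR = "client_4xx"        # malformed request, auth failure, etc.
    RATE_LIMITED = "rate_limited"      # HTTP 429 / RESOURCE_EXHAUSTED
    SAFETY_BLOCKED = "safety_blocked"  # response withheld by safety policy
    SERVER_ERROR = "server_5xx"
    TIMEOUT = "timeout"                # transport timeout, no status received


def classify(status: Optional[int], safety_blocked: bool = False) -> Outcome:
    """Map an HTTP-style status plus a safety flag to exactly one class."""
    if status is None:
        return Outcome.TIMEOUT
    if status == 429:
        return Outcome.RATE_LIMITED
    if 500 <= status < 600:
        return Outcome.SERVER_ERROR
    if 400 <= status < 500:
        return Outcome.CLIENT_ERROR
    # A 2xx response can still carry a safety block in its payload.
    return Outcome.SAFETY_BLOCKED if safety_blocked else Outcome.SUCCESS


def availability(
    outcomes: Iterable[Outcome],
    excluded: FrozenSet[Outcome] = frozenset({Outcome.CLIENT_ERROR}),
) -> float:
    """Successes divided by all outcomes not in `excluded`.

    Whether safety blocks count against availability is a policy choice;
    pass them in `excluded` to leave them out of the denominator.
    """
    counts = Counter(outcomes)
    eligible = sum(n for outcome, n in counts.items() if outcome not in excluded)
    return counts[Outcome.SUCCESS] / eligible if eligible else 1.0


def breakdown(outcomes: Iterable[Outcome]) -> dict:
    """Per-class counts, e.g. to feed request_errors_total{class=...}."""
    return {o.value: n for o, n in Counter(outcomes).items()}
```

Keeping `excluded` explicit makes the availability policy visible in review rather than buried in a query.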

Streaming performance anatomy for Gemini

Streaming changes both the user experience and the measurement model. Gemini supports server-sent events (SSE) and SDK streaming. Key behaviors to capture:

- TTFT is the earliest signal of responsiveness; streaming typically reduces perceived latency by flushing tokens as they are generated.

- TTLT depends on output length, downstream tool invocations, and client-side parsing. Measure tokens/sec as a rolling rate per active stream and watch its stability under concurrency.

- Client backpressure matters: bound concurrent streams and observe how TCP/network, JSON/event parsing, and throttling policies affect both TTFT and tail TTLT.
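
A minimal way to capture these signals on the client is to timestamp the send and each received chunk with a monotonic clock. The sketch below assumes a hypothetical adapter, `start_stream`, that wraps your SDK or SSE call and yields the token count of each chunk. The `fake_stream` generator stands in for a real Gemini stream so the example runs offline, and the first chunk is used as a proxy for the first token.

```python
import asyncio
import time
from dataclasses import dataclass
from typing import AsyncIterator, Callable


@dataclass
class StreamTiming:
    ttft_s: float       # client send -> first chunk received
    ttlt_s: float       # client send -> last chunk received
    chunks: int
    output_tokens: int

    @property
    def tokens_per_s(self) -> float:
        """Generation rate after the first chunk (TTFT excluded)."""
        window = self.ttlt_s - self.ttft_s
        return self.output_tokens / window if window > 0 else float("nan")


async def measure_stream(start_stream: Callable[[], AsyncIterator[int]]) -> StreamTiming:
    """Time one streamed response.

    `start_stream` is a hypothetical adapter around your SDK/SSE call that
    yields the number of output tokens in each chunk.
    """
    sent = time.perf_counter()  # monotonic clock, read just before sending
    first = last = None
    chunks = tokens = 0
    async for n_tokens in start_stream():
        last = time.perf_counter()
        if first is None:
            first = last
        chunks += 1
        tokens += n_tokens
    if first is None:
        raise RuntimeError("stream closed before any chunk arrived")
    return StreamTiming(first - sent, last - sent, chunks, tokens)


async def fake_stream():
    """Stand-in for a real stream: ~50 ms to first chunk, then ~10 ms per chunk."""
    await asyncio.sleep(0.05)
    for i in range(5):
        if i:
            await asyncio.sleep(0.01)
        yield 8  # pretend each chunk carries 8 tokens


if __name__ == "__main__":
    t = asyncio.run(measure_stream(fake_stream))
    print(f"TTFT={t.ttft_s * 1000:.0f} ms TTLT={t.ttlt_s * 1000:.0f} ms {t.tokens_per_s:.0f} tok/s")
```

Recording `ttft_s` and `ttlt_s` into histograms, rather than logging means, keeps the tails intact.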

Cross-layer trace model for causal stitching

Distributed tracing must stitch across synchronous RPCs and asynchronous messaging to reconstruct causality:

- Root span: client→gateway request. Attributes should include model_name, model_version, interface (gemini_api|vertex_ai), mode (streaming|non_streaming), modalities (text|image|audio|video), input_tokens, expected_output_tokens, and prompt_size_bytes.

- Child spans: tokenization; safety/guardrails; the Gemini inference call; tool invocations (HTTP/database/vector) with latency and status; and RAG retrieval (query_latency, k, index_version).

- Streaming: represent the receive loop as a span enriched with TTFT, per-chunk counts, and tokens/sec observations.

- Messaging: create producer publish spans (topic, message_id, partition/offset or ack_id) and consumer receive/ack spans. Use span links, not strict parent-child relationships, across Pub/Sub and Kafka boundaries to preserve causality for fan-out and async processing.

- Propagate W3C tracecontext across HTTP/gRPC headers and message attributes. Export via the OpenTelemetry Collector; use a centralized backend for time-synced analysis.
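
On the wire, W3C tracecontext is a single `traceparent` string (`version-traceid-parentid-flags`). The stdlib-only sketch below shows a producer injecting it into message attributes and a consumer starting its own span that carries a link to the producer context instead of adopting it as parent. In practice the OpenTelemetry SDK's TraceContext propagator and span links handle this; the helper names here (`inject`, `start_consumer_span`, `ConsumerSpan`) are hypothetical, and starting the consumer span in a fresh trace is one design choice among several.

```python
import re
import secrets
from dataclasses import dataclass, field
from typing import Dict, List, Optional

# W3C Trace Context, version 00: version-traceid-parentid-flags (lowercase hex).
_TRACEPARENT = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


@dataclass(frozen=True)
class SpanContext:
    trace_id: str        # 32 hex chars
    span_id: str         # 16 hex chars
    sampled: bool = True

    def to_traceparent(self) -> str:
        return f"00-{self.trace_id}-{self.span_id}-{'01' if self.sampled else '00'}"


def parse_traceparent(value: str) -> Optional[SpanContext]:
    """Parse a version-00 traceparent; return None for anything invalid."""
    m = _TRACEPARENT.match(value.strip())
    if m is None:
        return None
    trace_id, span_id, flags = m.groups()
    if trace_id == "0" * 32 or span_id == "0" * 16:
        return None  # all-zero IDs are invalid
    return SpanContext(trace_id, span_id, bool(int(flags, 16) & 0x01))


def new_context() -> SpanContext:
    return SpanContext(secrets.token_hex(16), secrets.token_hex(8))


def inject(ctx: SpanContext, attributes: Dict[str, str]) -> Dict[str, str]:
    """Producer side: add traceparent to Pub/Sub attributes or Kafka headers
    (Kafka header values are bytes; encode/decode at the client boundary)."""
    return {**attributes, "traceparent": ctx.to_traceparent()}


@dataclass
class ConsumerSpan:
    context: SpanContext
    links: List[SpanContext] = field(default_factory=list)


def start_consumer_span(attributes: Dict[str, str]) -> ConsumerSpan:
    """Consumer side: a new span in its own trace, linked (not parented) to the producer."""
    span = ConsumerSpan(new_context())
    producer = parse_traceparent(attributes.get("traceparent", ""))
    if producer is not None:
        span.links.append(producer)
    return span
```

Because the link survives fan-out, one published message can be tied to many consumer traces without forcing them into a single parent-child tree.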

Metrics schema for distribution-aware tails

To diagnose tails, rely on histograms designed for accuracy in the extreme percentiles:

- End-to-end latency histogram: request_latency_seconds{workload_id, interface, streaming, modalities}

- TTFT histogram: ttft_seconds{workload_id, interface, modalities}

- Token counters: input_tokens_total, output_tokens_total; per-stream tokens_rate gauges

- Queue/stream progress: pubsub_undelivered_messages, pubsub_oldest_unacked_seconds; kafka_consumer_lag, partition_skew; dataflow_watermark_lateness_seconds

- Error/availability: request_errors_total{class}, availability_ratio

- Resource telemetry: cpu_usage, memory_working_set, gc_pause_seconds, gpu_utilization, gpu_memory_used, tpu_utilization, and network I/O

- Cost: request_count_by_sku, with cost per request/token computed downstream by joining billing data to request counters (this article does not report specific cost figures)

Enable exemplars with trace IDs on histogram buckets so p99+ samples jump directly to the relevant distributed traces for root cause. This tight loop—chart to trace—shortens tail diagnosis dramatically. 🔬
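
The mechanics behind bucketed quantiles and exemplars fit in a few lines. The sketch below is a hypothetical, stdlib-only histogram. It estimates a quantile by linear interpolation inside the target bucket (the approach Prometheus `histogram_quantile` uses) and keeps the most recent trace ID per bucket, so a p99 estimate can be traced back to a concrete request. Real client libraries and OpenTelemetry SDKs sample exemplars differently; treat this as an illustration only.

```python
import bisect
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class ExemplarHistogram:
    """Bucketed latency histogram that keeps one exemplar per bucket.

    `bounds` are ascending upper bounds in seconds (Prometheus `le` style);
    an implicit +Inf bucket catches anything above the last bound.
    """
    bounds: List[float]
    counts: List[int] = field(init=False)
    exemplars: List[Optional[Tuple[float, str]]] = field(init=False)

    def __post_init__(self) -> None:
        self.counts = [0] * (len(self.bounds) + 1)
        self.exemplars = [None] * (len(self.bounds) + 1)

    def observe(self, value: float, trace_id: str) -> None:
        i = bisect.bisect_left(self.bounds, value)  # first bucket with bound >= value
        self.counts[i] += 1
        self.exemplars[i] = (value, trace_id)       # simplification: latest sample wins

    def _bucket_for(self, q: float) -> Optional[int]:
        rank = q * sum(self.counts)
        cumulative = 0
        for i, c in enumerate(self.counts):
            if c and cumulative + c >= rank:
                return i
            cumulative += c
        return None

    def quantile(self, q: float) -> float:
        """Interpolate linearly inside the bucket holding the q-quantile."""
        i = self._bucket_for(q)
        if i is None:
            return float("nan")
        if i == len(self.bounds):
            return self.bounds[-1]  # +Inf bucket: only the last finite bound is known
        below = sum(self.counts[:i])
        rank = q * sum(self.counts)
        lower = self.bounds[i - 1] if i > 0 else 0.0
        return lower + (self.bounds[i] - lower) * (rank - below) / self.counts[i]

    def exemplar(self, q: float) -> Optional[Tuple[float, str]]:
        """(value, trace_id) stored in the bucket that holds the q-quantile."""
        i = self._bucket_for(q)
        return None if i is None else self.exemplars[i]
```

Bucket bounds set the resolution of the estimate: with bounds at 0.25 s and 0.5 s, any p99 in between is an interpolation, so place extra bounds around your SLO thresholds.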

Comparison Tables

Interface and response mode: what to measure and why it matters

The following comparisons highlight what to keep constant and what to watch when benchmarking Gemini-based pipelines. They point to tendencies you should validate under your own workloads and quotas.

| Dimension | Configuration A | Configuration B | Measurement focus | Typical tendencies (to verify) |
|---|---|---|---|---|
| Interface | Gemini API | Vertex AI | TTFT, p95/p99 latency, errors/availability, rate-limit behavior, cost attribution | Broadly similar core latency under matched quotas; Vertex AI adds enterprise controls and integrated operations tooling |
| Response mode | Non-streaming | Streaming | TTFT, TTLT, tokens/sec, client backpressure | Streaming lowers TTFT; TTLT tracks output length; watch client parsing CPU and stream concurrency |
| Ingress | Pub/Sub | Kafka | Queue lag vs consumer lag, end-to-end latency, backpressure signals | Both can meet low-latency targets; their operational metrics and control levers differ |
| RAG store | Vertex AI Vector Search (formerly Matching Engine) | BigQuery vector search | p95/p99 query latency, index freshness, throughput | Vector Search targets low-latency ANN at large scale; BigQuery combines SQL and vector search in one query |
| RAG store | AlloyDB pgvector | Vertex AI Vector Search | Latency vs transactional features | AlloyDB pgvector fits transactional patterns; Vector Search suits web-scale recall |
| Accelerators | CPU-only | GPU/TPU adjunct | Throughput–latency knee, utilization, cost per request | For self-hosted adjunct models (e.g., embeddings, rerankers), accelerators raise throughput and lower latency; they pay off on cost only at sustained high utilization (roughly 60–70% or more; thresholds vary) |

Keep quotas and rate-limit policies matched across interfaces for a fair comparison. Record rate-limit classes and retry behavior as first-class outcomes, not noise to be filtered.

Best Practices

Tail diagnosis: exemplars and trace-linked percentile confidence

- Use histogram-based quantiles (HdrHistogram-style or native histograms) to estimate p95/p99/p99.9 without losing tail fidelity.

- Attach exemplars so percentile charts link to traces. Inspect the spans attached to TTFT outliers, Gemini call latencies, or downstream tool/RAG spikes.

- Quantify uncertainty: compute confidence intervals for percentile estimates (e.g., bootstrap). Report effect sizes and confidence bounds when claiming performance improvements; avoid single-run anecdotes.
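
A percentile bootstrap is the simplest way to put error bars on a tail estimate: resample the run with replacement, recompute the quantile, and read the interval off the resampled estimates. The sketch below assumes raw per-request latencies are available and uses the nearest-rank percentile. `bootstrap_diff_ci` applies the same idea to the difference between two configurations, which is the effect size worth reporting.

```python
import math
import random
from typing import Iterable, Sequence, Tuple


def percentile(samples: Sequence[float], q: float) -> float:
    """Nearest-rank percentile for q in (0, 1]."""
    ordered = sorted(samples)
    return ordered[max(1, math.ceil(q * len(ordered))) - 1]


def _interval(estimates: Iterable[float], confidence: float) -> Tuple[float, float]:
    ordered = sorted(estimates)
    n = len(ordered)
    alpha = (1 - confidence) / 2
    return ordered[int(alpha * n)], ordered[min(n - 1, int((1 - alpha) * n))]


def bootstrap_ci(samples, q=0.99, n_resamples=2000, confidence=0.95, seed=0):
    """Percentile-bootstrap CI for the q-quantile of one run."""
    rng = random.Random(seed)
    k = len(samples)
    return _interval(
        (percentile(rng.choices(samples, k=k), q) for _ in range(n_resamples)),
        confidence,
    )


def bootstrap_diff_ci(baseline, candidate, q=0.99, n_resamples=2000, confidence=0.95, seed=0):
    """CI for quantile(candidate) - quantile(baseline); an interval entirely
    below zero suggests the candidate genuinely lowered the tail."""
    rng = random.Random(seed)
    diffs = (
        percentile(rng.choices(candidate, k=len(candidate)), q)
        - percentile(rng.choices(baseline, k=len(baseline)), q)
        for _ in range(n_resamples)
    )
    return _interval(diffs, confidence)
```

With only a few hundred samples, p99.9 resamples keep landing on the same few largest observations and the interval looks deceptively tight, which is one more reason to size runs for the tail you report.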

Bias-resistant load: open-loop arrivals and warm-up vs steady state

- Prefer open-loop arrivals (constant or Poisson RPS) to break the feedback between service time and arrival rate. This avoids coordinated omission that would otherwise hide real tail inflation during overload.

- Separate warm-up from steady-state measurement windows; do not mix cold and warm in the same distribution.

- Sweep context size, the number and size of retrieved chunks, and media sizes systematically. Record token usage per request so TTFT/TTLT can be correlated with input length and streaming chunk size.
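
The effect of coordinated omission is easy to reproduce with a toy model. The sketch below generates a Poisson open-loop schedule, then replays a fixed schedule through a hypothetical single-worker FIFO server and measures latency two ways: from when each request actually began service, and from when it was supposed to be sent. Only the second captures the queueing caused by a stall.

```python
import math
import random
from typing import List, Sequence, Tuple


def poisson_arrivals(rate_rps: float, duration_s: float, seed: int = 0) -> List[float]:
    """Open-loop schedule: send times fixed up front, independent of responses."""
    rng = random.Random(seed)
    t, sends = 0.0, []
    while True:
        t += rng.expovariate(rate_rps)  # exponential gaps give Poisson arrivals
        if t >= duration_s:
            return sends
        sends.append(t)


def simulate_fifo(intended: Sequence[float], service: Sequence[float]) -> Tuple[List[float], List[float]]:
    """Replay a schedule through a single-worker FIFO server.

    Returns (naive, corrected) latencies. `naive` starts the clock when a
    request actually begins service, roughly what a client blocked behind a
    slow response measures; `corrected` starts it at the intended send time,
    as an open-loop or CO-corrected harness does.
    """
    naive, corrected, free_at = [], [], 0.0
    for send, svc in zip(intended, service):
        start = max(send, free_at)  # wait if the worker is still busy
        free_at = start + svc
        naive.append(free_at - start)
        corrected.append(free_at - send)
    return naive, corrected


def p(samples: Sequence[float], q: float) -> float:
    """Nearest-rank percentile."""
    ordered = sorted(samples)
    return ordered[max(1, math.ceil(q * len(ordered))) - 1]


if __name__ == "__main__":
    sends = [i * 0.01 for i in range(100)]  # 100 RPS for one second
    service = [0.001] * 100
    service[10] = 0.5                        # one 500 ms stall
    naive, corrected = simulate_fifo(sends, service)
    print(f"naive p99={p(naive, 0.99) * 1000:.1f} ms, corrected p99={p(corrected, 0.99) * 1000:.1f} ms")
```

With one 500 ms stall among 100 requests sent at 100 RPS, the naive p99 stays near the 1 ms service time, while the corrected p99 reflects the roughly half-second queue that built up behind the stall.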

Throughput–latency knee detection and resource attribution

- Plot throughput vs latency and look for the knee where tail percentiles rise sharply. Overlay GPU/TPU/CPU utilizations, memory pressure, GC pauses, and network I/O.

- Use DCGM-based GPU metrics on GKE or Cloud TPU Monitoring where relevant; correlate utilization and memory headroom with tokens/sec stability and TTFT drift.

- For streaming, monitor concurrent active streams, tokens/sec variance, and client CPU parsing overhead. Backpressure at the client can show up as TTFT/TTLT stalls, growing receive buffers, or stream timeouts and disconnects.
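
One way to locate the knee automatically from a load sweep is sketched below. It uses a simplified Kneedle-style heuristic (an assumption, not a method prescribed here): normalize offered load and p99 latency to [0, 1] and pick the point that lies farthest below the diagonal. The sweep and utilization values in the usage block are made up for illustration.

```python
from typing import Sequence


def knee_index(load: Sequence[float], p99: Sequence[float]) -> int:
    """Return the index of the knee in a rising latency-vs-load curve.

    Simplified Kneedle-style heuristic: min-max normalize both axes, then pick
    the point farthest below the diagonal (max of x_norm - y_norm).
    Assumes `load` is sorted ascending and the curve is roughly convex.
    """
    if len(load) != len(p99) or len(load) < 3:
        raise ValueError("need at least 3 paired points")
    x0, x1 = min(load), max(load)
    y0, y1 = min(p99), max(p99)
    if x1 == x0 or y1 == y0:
        raise ValueError("flat axis; no knee to find")
    diffs = [(x - x0) / (x1 - x0) - (y - y0) / (y1 - y0) for x, y in zip(load, p99)]
    return max(range(len(diffs)), key=diffs.__getitem__)


if __name__ == "__main__":
    rps = [100, 200, 300, 400, 500, 600]
    p99 = [0.20, 0.21, 0.22, 0.25, 0.60, 1.50]       # illustrative sweep
    gpu_util = [0.22, 0.41, 0.58, 0.74, 0.91, 0.97]  # illustrative overlay
    k = knee_index(rps, p99)
    print(f"knee near {rps[k]} RPS (p99={p99[k]} s, GPU util={gpu_util[k]:.0%})")
```

Reading the resource overlay at the returned index is what turns "latency rose" into "latency rose once GPU memory or CPU parsing saturated".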

Queue and watermark health for streaming pipelines

- Pub/Sub: undelivered messages and oldest unacked age indicate consumer lag and risk to latency SLOs.

- Kafka: consumer lag per group/partition, in-sync replica (ISR) counts, and partition skew surface imbalance and backlog early.

- Dataflow/Beam: watermark lateness, backlog size, and autoscaling signals show whether event-time guarantees are slipping. Escalating watermark lateness should trigger backpressure or shedding policies upstream.
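
Backlog metrics become actionable when translated into time. The sketch below, with hypothetical field names and verdict thresholds, estimates how long the current backlog takes to drain at the observed publish and consume rates, then compares that, along with oldest-unacked age, to the share of the latency SLO budgeted for queueing.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class QueueSnapshot:
    backlog: int              # Pub/Sub undelivered messages or summed Kafka consumer lag
    publish_rate: float       # messages/s entering the topic
    consume_rate: float       # messages/s acked (Pub/Sub) or committed (Kafka)
    oldest_unacked_s: float   # age of the oldest unprocessed message, in seconds


def drain_time_s(s: QueueSnapshot) -> Optional[float]:
    """Seconds to clear the backlog at current rates; None if it is not shrinking."""
    if s.backlog == 0:
        return 0.0
    net = s.consume_rate - s.publish_rate
    return s.backlog / net if net > 0 else None


def queue_verdict(s: QueueSnapshot, queue_budget_s: float) -> str:
    """Compare queue signals with the part of the latency SLO allotted to queueing."""
    if s.oldest_unacked_s > queue_budget_s:
        return "breach"    # messages are already older than the budget
    drain = drain_time_s(s)
    if drain is None:
        return "growing"   # backlog not shrinking: scale consumers or shed upstream
    if drain > queue_budget_s:
        return "at_risk"   # backlog takes longer than the budget to clear
    return "healthy"
```

For example, a 1,000-message backlog consumed at 500 msg/s against 400 msg/s of new traffic drains in 1,000 / (500 - 400) = 10 s.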

Error taxonomy, availability, and retry hygiene

- Classify errors explicitly: 4xx vs 5xx, timeouts, safety policy blocks, and rate-limit responses. Treat safety blocks as reportable outcomes with separate accounting from transport/server failures.

- Availability is the success ratio over the SLO window, typically excluding client-side faults but including rate-limit classes as first-class signals for capacity planning.

- Apply exponential backoff with jitter; cap total retry time; prevent retry storms under partial failures. Show that retry policies don’t amplify tails or consume error budgets prematurely.
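
The retry rules above can be sketched as capped exponential backoff with full jitter, bounded both by attempt count and by cumulative sleep. `RetryableError` is a hypothetical marker your client would raise for 429, 5xx, and timeout classes; safety blocks and other 4xx responses are deliberately not retried.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class RetryableError(Exception):
    """Hypothetical marker: raise for 429, 5xx, and timeout classes only."""


def call_with_backoff(
    fn: Callable[[], T],
    base_s: float = 0.2,
    cap_s: float = 5.0,
    max_backoff_total_s: float = 20.0,
    max_attempts: int = 6,
    rng: Optional[random.Random] = None,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `fn`, retrying RetryableError with capped exponential backoff and full jitter.

    The delay before retry n (n = 0, 1, ...) is uniform(0, min(cap_s, base_s * 2**n)).
    Retrying stops after `max_attempts` calls, or when the next delay would push
    cumulative sleep past `max_backoff_total_s`; the last error is re-raised.
    Any other exception (e.g. a 4xx or safety outcome) propagates immediately.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be >= 1")
    rng = rng or random.Random()
    slept = 0.0
    for attempt in range(max_attempts):
        try:
            return fn()
        except RetryableError:
            delay = rng.uniform(0.0, min(cap_s, base_s * 2 ** attempt))
            if attempt == max_attempts - 1 or slept + delay > max_backoff_total_s:
                raise
            sleep(delay)
            slept += delay
    raise AssertionError("unreachable")
```

Injecting `rng` and `sleep` keeps the policy testable. The budget here caps cumulative backoff only, so enforce an overall request deadline separately if call durations matter.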

Interface-level considerations: Gemini API vs Vertex AI

- Keep payloads, prompts, and quotas matched; record rate-limit responses distinctly. Measure TTFT, TTLT/end-to-end latency, tokens/sec, errors/availability, and cost attribution.

- Vertex AI typically includes IAM, VPC-SC, and observability integration that can simplify enterprise operations and cost attribution. Benchmark with those controls enabled if they’re part of the required deployment posture.

Statistical rigor for claims

- Use sample sizes large enough for robust p99/p99.9 estimates. Do not average percentiles across runs or instances; merge the underlying histograms or raw samples and recompute.

- Replicate runs and demonstrate stability across replications. Claim an improvement only when the confidence intervals separate and the effect size is meaningful relative to SLO thresholds.

- Publish pass/fail criteria before the test. For example, p95 end-to-end ≤ 800 ms for text non-streaming, p95 TTFT ≤ 200 ms for streaming, and p99 TTLT ≤ 2.5 s under steady-state conditions. Adjust values per workload and modality.
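
Pass/fail criteria are easiest to keep honest when they live in code before the run. The sketch below encodes the example thresholds above and refuses to issue a verdict when too few samples lie beyond the quantile. The `MIN_TAIL_SAMPLES` value of 50 and the metric names are hypothetical choices, not a standard.

```python
import math
from dataclasses import dataclass
from typing import Dict, Sequence


@dataclass(frozen=True)
class Criterion:
    metric: str          # hypothetical metric key
    quantile: float      # e.g. 0.95 or 0.99
    max_seconds: float   # pass if the observed quantile is <= this value


# The example thresholds from the text; adjust per workload and modality.
CRITERIA = (
    Criterion("e2e_text_nonstreaming", 0.95, 0.800),
    Criterion("ttft_streaming", 0.95, 0.200),
    Criterion("ttlt_streaming", 0.99, 2.5),
)

# Hypothetical rule: require at least this many samples beyond the quantile.
MIN_TAIL_SAMPLES = 50


def nearest_rank(samples: Sequence[float], q: float) -> float:
    ordered = sorted(samples)
    return ordered[max(1, math.ceil(q * len(ordered))) - 1]


def evaluate(samples_by_metric: Dict[str, Sequence[float]]) -> Dict[str, str]:
    """Return pass / fail / insufficient_data per predeclared criterion."""
    verdicts = {}
    for c in CRITERIA:
        samples = samples_by_metric.get(c.metric, [])
        # Expected samples beyond the quantile, e.g. 10,000 x (1 - 0.99) = 100.
        if len(samples) * (1 - c.quantile) < MIN_TAIL_SAMPLES:
            verdicts[c.metric] = "insufficient_data"
            continue
        observed = nearest_rank(samples, c.quantile)
        verdicts[c.metric] = "pass" if observed <= c.max_seconds else "fail"
    return verdicts
```

Under this rule, a p99 verdict needs at least 5,000 samples, and a p99.9 verdict would need 50,000.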

Instrumentation and dashboards that drive action

- Standardize OpenTelemetry across the client, gateway, orchestrators, RAG/vector stores, and tool integrations. Propagate tracecontext across RPC and messaging boundaries; export to a central trace backend.

- Use Prometheus-compatible metrics with exemplars for latency, TTFT, tokens/sec, queue lag, watermark lateness, error classes, availability, cold starts, and cache hits. Export to a managed Prometheus service and wire exemplars to your tracing backend for one-click tail investigations.

- Build dashboards for end-to-end percentiles, TTFT/TTLT, concurrency, tokens/sec stability, error class trends, and resource/capacity overlays. Include quick links from p99 buckets to traces.

- Alert on SLO burn rates using multi-window policies (fast 5m and slower 1h windows). Add queue lag and watermark lateness alerts aligned to latency SLOs. Canary alerts should be tighter and more sensitive.
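
Burn rate is the observed error ratio divided by the error ratio the SLO allows. For a 30-day window, spending 2% of the budget in one hour corresponds to a burn rate of 0.02 × 720 h / 1 h = 14.4, the page threshold the Google SRE Workbook pairs with a 1h long window and a 5m short window. The sketch below assumes a 99.9% SLO and takes (errors, total) counts per window from your metrics backend.

```python
from typing import Tuple

SLO_TARGET = 0.999          # assumption: 99.9% availability SLO
SLO_PERIOD_HOURS = 30 * 24  # assumption: 30-day rolling SLO window


def burn_rate(errors: int, total: int, slo: float = SLO_TARGET) -> float:
    """Error ratio divided by the budgeted error ratio; 1.0 spends the budget exactly on schedule."""
    return (errors / total) / (1 - slo) if total else 0.0


def burn_threshold(budget_fraction: float, long_window_hours: float) -> float:
    """Burn rate that would consume `budget_fraction` of the budget within the long window."""
    return budget_fraction * SLO_PERIOD_HOURS / long_window_hours


def should_page(long_1h: Tuple[int, int], short_5m: Tuple[int, int]) -> bool:
    """Page only if both the 1h and 5m windows burn faster than the threshold.

    Each argument is (errors, total) for that window. The 1h window gives
    statistical weight; the 5m window lets the alert clear soon after recovery.
    """
    threshold = burn_threshold(0.02, 1.0)  # 2% of a 30-day budget in 1h -> 14.4
    return burn_rate(*long_1h) > threshold and burn_rate(*short_5m) > threshold
```

The same functions serve slower ticket-level policies by changing `budget_fraction` and the window pair, and a tighter `SLO_TARGET` makes canary alerts more sensitive.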

Load generation and tooling

- Use open-loop-capable tools and executors for HTTP/gRPC and streaming paths. Options include k6, whose constant-arrival-rate and ramping-arrival-rate executors keep request starts independent of response times; Locust for orchestration-heavy user flows with custom load shapes (its user model is closed-loop by default); Vegeta for constant-RPS HTTP load; and CO-corrected tools such as wrk2 where applicable.

- Honor published quotas and model-specific rate limits. Cap concurrency and stream counts to reflect realistic client limits; measure client CPU/network impact under peak rates.

Conclusion

Tail-accurate observability for Gemini pipelines relies on clear SLIs, cross-layer tracing, and distribution-aware metrics that survive the complexity of streaming, multimodal, RAG, and long-context workloads. The cornerstone is OpenTelemetry-driven causal stitching across HTTP/gRPC and messaging, paired with histogram-based quantiles and exemplars to investigate the p95/p99/p99.9 tails quickly. Bias-resistant benchmarking with open-loop arrivals, warm-up separation, and replicated runs turns anecdotal performance into evidence. And by detecting the throughput–latency knee and attributing it with GPU/TPU/CPU/memory/network telemetry, teams can make confident capacity and optimization decisions.

Key takeaways:

- Define TTFT/TTLT, cold vs warm, and error classes explicitly; measure them with histogram-based quantiles and exemplars.

- Use span links to preserve causality across Pub/Sub and Kafka; enrich spans with RAG/tool attributes and streaming TTFT.

- Avoid coordinated omission with open-loop arrivals; isolate steady state and compute percentile confidence intervals.

- Correlate latency knees with GPU/TPU/CPU/memory/network telemetry and queue/watermark signals.

- Benchmark Gemini API vs Vertex AI under matched quotas and rate limits; treat rate-limit and safety outcomes as first-class metrics.

Next steps:

- Instrument critical paths end-to-end with OpenTelemetry; enable exemplars and centralized trace export.

- Stand up dashboards for latency/TTFT/TTLT with trace links; add queue/lag/watermark and resource overlays.

- Establish open-loop load tests with a predeclared pass/fail matrix; run replicated experiments and publish bootstrap CIs.

- Calibrate SLOs per workload and modality; adopt multi-window burn-rate alerts and canary analysis for safe releases.

The payoff is operational clarity. With tail-accurate signals, statistically defensible tests, and cross-layer visibility, Gemini pipelines move from promising demos to dependable, real-time systems at scale. ⚙️