Redis‑Backed Action Cable and Tuned Worker Pools Drive Deterministic Real‑Time Performance in Rails 7.1+

Real-time Rails isn’t just “good enough” anymore—it’s fast, predictable, and operationally mature when configured with intent. Enable permessage‑deflate and compressible frames shed 40–80% of their bandwidth burden; tune an undersized Action Cable worker pool and throughput climbs 1.5–3× with cleaner p95/p99; switch from PostgreSQL LISTEN/NOTIFY to Redis pub/sub and multi‑node fan‑out headroom jumps 3–10×. These are not edge‑case gains. They’re the natural result of Rails 7.1+ leaning into a straightforward reactor‑plus‑worker‑pool model, a hardened Redis adapter, and Hotwire/Turbo Streams ergonomics that move expensive rendering off the hot path.

This technical deep dive explains how Action Cable’s architecture translates into tail‑latency discipline, what the Redis adapter delivers in practice, and why LISTEN/NOTIFY remains niche. It walks through Turbo Streams’ …_later offload path, WebSocket compression trade‑offs, and the realities of backpressure and slow consumers. It also covers subscription lifecycle correctness, client‑side efficiency patterns, and how to interpret p50/p95 latency and throughput ceilings to avoid chasing the wrong bottleneck. Expect practical configuration examples and tables that make the trade‑offs explicit.

Architecture/Implementation Details

The reactor–thread model and why it tames tail latency

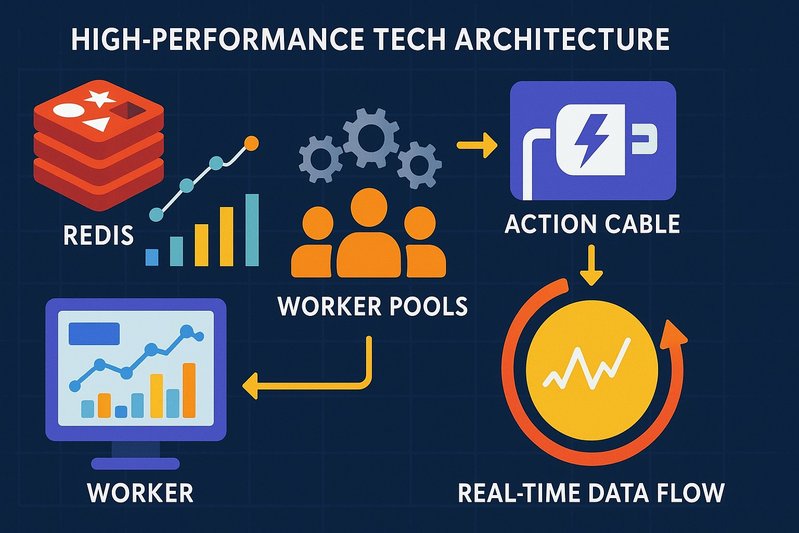

Action Cable separates concerns cleanly: a nonblocking WebSocket I/O loop handles frames, while a Ruby worker pool executes channel callbacks (subscribed, unsubscribed, and the action methods clients invoke via perform) and processes inbound messages. The default worker pool is intentionally small (4 threads), which is friendly to small apps but a liability under bursty fan‑out. When the pool is undersized, work queues build up, and p95/p99 latencies inflate despite a seemingly idle I/O reactor.

```mermaid
flowchart TD;
  A[WebSocket I/O Loop] -->|handles| B[Frames];
  A --> C[Worker Pool];
  C -->|executes| D[Channel Callbacks];
  D --> E[Incoming Messages];
  C -->|spawns| F[Threads];
  F -->|processes| G[Work Queues];
  G --> H[Tail Latency];
```

This flowchart represents the reactor-thread model of Action Cable, illustrating how the WebSocket I/O loop interacts with worker pools, executes channel callbacks, and processes incoming messages to manage tail latency effectively.

Right‑size the pool, and tail latency snaps back to predictable. Increasing a too‑small pool to 8–16 threads typically yields a 1.5–3× throughput increase and lowers p95 until you hit the next constraint (CPU, Redis, or NIC). Over‑sizing, however, backfires: thread contention goes up and co‑located HTTP endpoints can be starved if Action Cable shares a Puma cluster.
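As a rough starting point for "right-sizing", Little's law gives an estimate of how many threads are busy at once: arrival rate multiplied by mean service time. The sketch below is only a back-of-the-envelope aid; the method name, the 70% target utilization, and the sample numbers are illustrative assumptions, not measurements from this article.

```ruby
# Rough pool sizing via Little's law: busy threads ~= arrival rate * service time.
# All inputs here are hypothetical; substitute numbers measured on your own system.
def suggested_pool_size(callbacks_per_sec:, mean_service_ms:, target_utilization: 0.7)
  busy_threads = callbacks_per_sec * (mean_service_ms / 1000.0)
  # Leave headroom so queues drain during bursts, and cap the result
  # to avoid the over-sizing problems described above.
  (busy_threads / target_utilization).ceil.clamp(1, 64)
end

size = suggested_pool_size(callbacks_per_sec: 400, mean_service_ms: 15)
puts "suggested worker_pool_size: #{size}"
```

Treat the result as a first guess to validate against p95/p99 under load, since mean service time hides the bursts that actually drive tail latency.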

Configuration is straightforward:

```ruby
# config/environments/production.rb
config.action_cable.worker_pool_size = 8
```

Pair that with a sensibly provisioned Puma (workers ≈ CPU cores, 8–16 threads per worker, preload_app!) and you get predictable concurrency for mixed HTTP + WebSocket workloads. Many teams also isolate Action Cable in its own Puma to avoid cross‑traffic interference when real‑time traffic spikes.

Redis pub/sub: what it delivers (and what it doesn’t)

The Redis subscription adapter is the production‑grade path for multi‑node Action Cable. It uses Redis pub/sub for cross‑process coordination, supports TLS and authentication via URL, and has well‑understood reconnect behavior. With a dedicated Redis for pub/sub traffic, publish‑to‑receive latency is typically single‑digit milliseconds with low variance; 10k+ messages per second across nodes is common before CPU or NICs become the limiter. Critically, Redis avoids the ~8 KB payload cap of PostgreSQL NOTIFY and doesn’t chew up your application’s DB pool, which is why fan‑out headroom for medium/large payloads increases by a factor of roughly 3–10× in multi‑node setups.

Operationally, failover or network partitions will drop subscriptions; the adapter resubscribes on reconnect. Using TCP keepalives, appropriate client timeouts, and a dedicated instance improves recovery and prevents reconnect storms. Specific delivery guarantees beyond pub/sub semantics are not detailed here; treat Redis pub/sub as high‑speed, best‑effort fan‑out rather than a durable queue.

Enable it with:

```yaml
# config/cable.yml
production:
  adapter: redis
  url: <%= ENV["REDIS_URL"] %>
  channel_prefix: myapp_production
```

PostgreSQL LISTEN/NOTIFY: fit‑for‑purpose, but narrow

LISTEN/NOTIFY keeps a persistent DB connection per server process, competes for the DB pool under load, and caps payloads at about 8 KB. Latency and throughput are adequate for small or single‑node deployments with modest fan‑out and tiny messages. Beyond that, operational coupling to the database and the payload limit make it a poor match for real‑time broadcasting at scale. The path is simple—set adapter: postgresql—but the ceiling arrives quickly; most production systems benefit from Redis.
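To make the payload ceiling concrete, here is a minimal sketch of a size check that could run before broadcasting. It assumes the payload is sent as JSON and uses PostgreSQL's default limit (payloads must be shorter than 8000 bytes); the helper name and sample payloads are hypothetical, and the real on-wire size can differ slightly depending on how the adapter encodes the message.

```ruby
require "json"

# PostgreSQL's default configuration requires NOTIFY payloads shorter than 8000 bytes.
NOTIFY_PAYLOAD_LIMIT_BYTES = 8000

# Returns true when the JSON-encoded payload would fit in a single NOTIFY.
# (Hypothetical helper for illustration; not part of Action Cable.)
def fits_in_notify?(payload)
  JSON.generate(payload).bytesize < NOTIFY_PAYLOAD_LIMIT_BYTES
end

small = { id: 1, body: "hello" }
large = { id: 2, html: "<li>row</li>" * 1_000 } # about 12 KB of HTML

puts fits_in_notify?(small)
puts fits_in_notify?(large)
```

A rendered Turbo Stream partial of even moderate size lands in the second category, which is why the limit tends to bite sooner than expected.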

Turbo Streams: offloading rendering to protect the hot path

Server‑rendered Turbo Streams shrink client CPU and JavaScript complexity by delivering declarative DOM operations. But rendering ERB synchronously at broadcast time blocks whichever thread triggers the broadcast (a request thread or a channel worker), and that cost compounds when updates spike or fan‑out climbs. The …_later helpers solve this by moving rendering to background jobs:

```ruby
class Message < ApplicationRecord
  after_create_commit -> { broadcast_append_later_to "room_#{room_id}" }
end
```

This shift materially improves tail latency in bursty 1:100+ fan‑out scenarios. Expect a 20–50% reduction in p95 when heavy partials are rendered via …_later variants, trading head‑of‑line blocking for job‑queue latency that is typically smaller and easier to absorb.

Compression: permessage‑deflate’s bandwidth/CPU trade‑space

When both ends support it, the underlying websocket‑driver negotiates permessage‑deflate automatically—no application changes required. For compressible frames like JSON or turbo‑stream HTML, bandwidth drops by 40–80%, which often lifts sustainable messages per second by 10–30% before another bottleneck takes over. CPU overhead is modest for small messages and grows with larger frames (for example, around 32 KB), so measure before and after. If negotiation fails or a client doesn’t support the extension, the connection proceeds uncompressed without disruption.
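One way to "measure before and after" without touching production is to estimate compressibility offline. The sketch below uses Ruby's standard Zlib as a stand-in for permessage‑deflate (both are DEFLATE-based, though framing and window settings differ), so treat the numbers as approximate. The sample payloads and the helper name are made up for illustration.

```ruby
require "json"
require "zlib"

# Estimates the fraction of bytes DEFLATE would save for one message.
# A value near 0 (or below) means the frame is effectively incompressible.
def deflate_savings(message)
  compressed = Zlib::Deflate.deflate(message, Zlib::DEFAULT_COMPRESSION)
  1.0 - (compressed.bytesize.to_f / message.bytesize)
end

# Hypothetical JSON frame: repetitive keys compress well.
rows = Array.new(50) { |i| { id: i, author: "user_#{i}", body: "Message number #{i}", read: false } }
json_frame = JSON.generate(rows)

# Random bytes stand in for already-compressed or encrypted content.
opaque_frame = Random.new(1).bytes(2048)

json_savings = deflate_savings(json_frame)
opaque_savings = deflate_savings(opaque_frame)

puts format("JSON frame:   %d bytes, about %.0f%% saved", json_frame.bytesize, json_savings * 100)
puts format("Opaque frame: %d bytes, about %.0f%% saved", opaque_frame.bytesize, opaque_savings * 100)
```

Running this against a sample of real frames gives a quick sense of whether a channel is likely to benefit from compression before spending CPU on it.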

Backpressure and slow consumers on WebSocket/TCP

TCP enforces backpressure. If kernel send buffers fill, writes slow, and slow consumers can create head‑of‑line blocking that cascades. Action Cable doesn’t enforce application‑level rate limiting, so add guardrails for inbound events and keep per‑message work small. A simple pattern uses Redis counters to throttle:

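```ruby
class RoomChannel < ApplicationCable::Channel
  # Assumes a shared redis-rb client; Redis.current was removed in redis-rb 5.
  REDIS = Redis.new(url: ENV["REDIS_URL"])
  LIMIT_PER_SECOND = 5

  # `speak` is a hypothetical channel action used for illustration.
  def speak(data)
    # Drop excess events; `reject` only applies to subscriptions inside `subscribed`.
    return if throttled?("speak")
    # process message
  end

  private

  # Fixed one-second window: count events per user, action, and second.
  def throttled?(action)
    key = "cable:ratelimit:#{current_user.id}:#{action}:#{Time.now.to_i}"
    count = REDIS.incr(key)
    REDIS.expire(key, 2) if count == 1 # the key is per-second, so a short TTL suffices
    count > LIMIT_PER_SECOND
  end
end
```

On the egress side, prefer small, compressible updates, move expensive rendering to …_later, and watch for channels whose consumers consistently lag; those are candidates for coalescing or sampling.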
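Coalescing can be as simple as keeping only the newest payload per stream and flushing on a timer. The class below is a minimal in-process sketch of that idea, not an Action Cable feature: `BroadcastCoalescer` and the sample streams are hypothetical, and in an application the flush block would call something like `ActionCable.server.broadcast(stream, payload)`.

```ruby
# Keeps only the newest payload per stream until flushed.
# Hypothetical helper for illustration; not part of Action Cable.
class BroadcastCoalescer
  def initialize
    @pending = {}
    @lock = Mutex.new
  end

  # Overwrites any payload already queued for the same stream.
  def push(stream, payload)
    @lock.synchronize { @pending[stream] = payload }
  end

  # Yields each stream's latest payload once, clears the buffer,
  # and returns how many streams were flushed.
  def flush
    batch = @lock.synchronize do
      current = @pending
      @pending = {}
      current
    end
    batch.each { |stream, payload| yield stream, payload }
    batch.size
  end
end

coalescer = BroadcastCoalescer.new
coalescer.push("room_1", { typing: 3 })
coalescer.push("room_1", { typing: 4 }) # supersedes the previous update
coalescer.push("room_2", { typing: 1 })

sent = []
flushed = coalescer.flush { |stream, payload| sent << [stream, payload] }
puts "flushed #{flushed} streams: #{sent.inspect}"
```

This only suits updates where the latest state replaces earlier ones (presence, counters, typing indicators); append-style messages need batching rather than overwriting.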

Subscription lifecycle correctness and reconnect cost

Correct lifecycle handling keeps resource usage predictable and reconnects cheap:

- Call stop_all_streams in unsubscribed to avoid lingering subscriptions.

- Keep connect authorization lightweight—signed cookies and a one‑time current_user lookup—so reconnect storms don’t hammer your DB.

- Set proxy/load balancer idle timeouts comfortably above the server’s ping interval (Action Cable pings every 3 seconds by default; idle timeouts of 60 seconds or more are common) and enable sticky sessions. Too‑short timeouts sever idle sockets and trigger avoidable reconnect loops.

Comparison Tables

Redis vs. PostgreSQL adapters for Action Cable fan‑out

| Dimension | Redis pub/sub adapter | PostgreSQL LISTEN/NOTIFY |
|---|---|---|
| Payload limits | No adapter‑level cap typical for pub/sub | ~8 KB NOTIFY payload cap |
| Cross‑node fan‑out | High; 3–10× headroom vs. PG at scale | Limited; competes with DB pool |
| Latency variance | Low when Redis is dedicated | Higher variance under DB load |
| Resource coupling | Dedicated Redis instance recommended | Consumes a DB connection per server process |
| Reconnect behavior | Stable resubscribe semantics; configure keepalives/timeouts | Tied to DB connection stability |
| Operational fit | Multi‑node production; medium/large payloads | Small, low‑scale, or single‑node apps |

Features that move the needle

| Lever | Typical impact | Caveats |
|---|---|---|
| Increase worker_pool_size from ~4 to 8–16 | 1.5–3× throughput; reduced p95/p99 until CPU/Redis/NIC saturate | Over‑sizing can worsen p95 and harm HTTP latency if sharing Puma |
| Use …_later Turbo Streams helpers | −20–50% p95 in bursty 1:100+ fan‑out with heavy partials | Adds job‑queue latency; ensure background jobs are healthy |
| Enable permessage‑deflate | −40–80% bandwidth; +10–30% sustainable msgs/sec | CPU cost rises with large frames; measure before/after |
| Switch from PG to Redis adapter | 3–10× higher fan‑out headroom; avoids DB pool contention | Requires dedicated Redis and failover discipline |

Best Practices

Shape concurrency around your CPU and workload

- Start with worker_pool_size at 8–16 when CPU headroom exists, then tune using real latency and throughput metrics.

- In Puma, use workers≈cores, threads 8–16, and preload_app!. If HTTP endpoints suffer during real‑time spikes, isolate Action Cable in its own Puma.

Make broadcasts small, compressible, and off the hot path

- Use Turbo Streams for server‑rendered DOM changes; prefer …_later variants for render‑heavy updates.

- Coalesce or sample bursts when the same stream receives frequent updates.

- Keep payloads compressible (JSON, HTML snippets) to benefit from permessage‑deflate.

Treat Redis as a first‑class transport

- Run a dedicated Redis for pub/sub traffic; avoid mixing with heavy key/value workloads or unnecessary fsync configurations.

- Configure TCP keepalive and client timeouts to speed failure detection.

- Monitor Redis CPU and network utilization alongside application metrics.

Harden the edge

- Configure the load balancer to pass Upgrade: websocket, enable sticky sessions, and set idle timeouts above the ping interval.

- Watch reconnect rates; unexpected spikes often trace back to idle timeouts or LB redeploys.

Build in flow control and guardrails

- Rate‑limit inbound events using Redis counters or middleware patterns.

- Keep per‑message channel work small; heavy operations belong in background jobs.

- Track slow consumers and adjust broadcast strategies as needed.

Observe what matters and tune iteratively

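- Subscribe to Active Support notifications for per‑channel and per‑action durations, broadcasts, and transmissions; collect queue depths, connection counts, and errors through your own metrics, since the built‑in events do not report them directly.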

- Add tracing around channel perform actions and broadcast calls; correlate with Redis client instrumentation to localize latency.

- Validate any tuning change by comparing p50/p95 latency, delivered msgs/sec at a fixed drop/error rate, egress bandwidth, and reconnect rates.

Keep lifecycle and auth cheap

- Ensure stop_all_streams runs in unsubscribed to prevent leaks.

- Use signed/encrypted cookies and memoized user lookup on connect; avoid per‑message DB checks.

- Restrict allowed_request_origins to reduce negotiation overhead and abuse surface.

Practical Examples

```ruby
# config/environments/production.rb
# Start conservatively, then tune with metrics
config.action_cable.worker_pool_size = 12
```

```yaml
# config/cable.yml
production:
  adapter: redis
  url: <%= ENV["REDIS_URL"] %> # supports TLS/auth via URL
  channel_prefix: myapp_production
```

```ruby
# Turbo Streams: offload heavy rendering
class Message < ApplicationRecord
  after_create_commit -> { broadcast_append_later_to "room_#{room_id}" }
end
```

```ruby
# Instrumentation for metrics
ActiveSupport::Notifications.subscribe(/action_cable/) do |name, start, finish, id, payload|
  duration_ms = (finish - start) * 1000
  # export counts, durations, failures, per-channel stats
end
```

```javascript
// @rails/actioncable client with sane defaults
import { createConsumer } from "@rails/actioncable"

const consumer = createConsumer("wss://example.com/cable")

const channel = consumer.subscriptions.create(
  { channel: "RoomChannel", id: 42 },
  {
    connected() { /* ready */ },
    disconnected() { /* automatic backoff & retry */ },
    received(data) {
      // Batch DOM updates where possible
      requestAnimationFrame(() => {
        // apply update (or let Turbo Streams do it)
      })
    }
  }
)
```

Interpreting Performance: p50/p95, throughput ceilings, and bottleneck shifts

Focus on changes that improve delivered messages at a fixed error/drop rate without destabilizing reconnect behavior:

- Track publish‑to‑receive latency (embed server timestamps in payloads and subtract them from the client receive time) and compute p50/p95. Expect p95 to tighten as you raise worker_pool_size from an undersized baseline and as you offload rendering to …_later.

- Measure delivered msgs/sec under steady and bursty loads. Compression often buys 10–30% more throughput before the next limit.

- Monitor egress bandwidth. With permessage‑deflate, bandwidth typically drops 40–80% on compressible frames; this translates into lower NIC utilization and more headroom.

- Watch CPU on app nodes and Redis. As you remove bottlenecks (e.g., worker pool saturation), the next one will surface—commonly CPU or network, sometimes Redis itself.

- Track reconnect rates during normal operation and induced failures (Redis restart, LB drain). Proper keepalives/timeouts and sticky sessions reduce reconnect spikes and shorten recovery.
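The p50/p95 figures above can be computed from raw latency samples with a nearest-rank percentile. This is a minimal sketch: the sample values are invented, and it assumes client and server clocks are synchronized closely enough for the embedded timestamps to be meaningful.

```ruby
# Nearest-rank percentile over a list of latency samples (milliseconds).
def percentile(samples, pct)
  raise ArgumentError, "no samples" if samples.empty?

  sorted = samples.sort
  rank = (pct / 100.0 * sorted.size).ceil
  sorted[[rank, 1].max - 1]
end

# Hypothetical publish-to-receive latencies in milliseconds;
# one slow delivery dominates the tail while barely moving the median.
samples = [4, 5, 5, 6, 7, 7, 8, 9, 12, 48]

p50 = percentile(samples, 50)
p95 = percentile(samples, 95)
puts "p50=#{p50}ms p95=#{p95}ms"
```

The gap between the two values is the signal to watch: a change that leaves p50 flat but pulls p95 down is removing queueing, which is exactly what pool sizing and render offload are meant to do.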

Use this loop to tune toward your SLOs: increase the worker pool until CPU approaches safe limits; move rendering off the hot path; enable compression; confirm Redis isn’t contended; and revisit load balancer timeouts. Expect gains to be stepwise—each improvement will shift the bottleneck, not eliminate it.

Conclusion

Rails 7.1+ gives Action Cable a pragmatic recipe for deterministic real‑time performance: keep I/O nonblocking, push work into a right‑sized thread pool, rely on Redis pub/sub for cross‑node fan‑out, and use Turbo Streams to move heavy rendering off the hot path. WebSocket compression stretches bandwidth, and disciplined backpressure handling keeps slow consumers from poisoning the well. The operational playbook—sticky sessions, sensible idle timeouts, a dedicated Redis, and metrics via Active Support notifications—turns these building blocks into predictable p95/p99 and healthy reconnect behavior at scale.

Key takeaways:

- Tune the Action Cable worker pool first; it often delivers the largest p95/throughput improvements.

- Prefer Redis pub/sub for multi‑node fan‑out; LISTEN/NOTIFY remains a small‑scale option due to payload limits and DB coupling.

- Move render‑heavy broadcasts to …_later; it protects the reactor and tightens tail latency.

- Enable permessage‑deflate to trade modest CPU for significant bandwidth and throughput gains.

- Measure relentlessly: p50/p95, delivered msgs/sec, egress bandwidth, Redis and app CPU, and reconnect rates.

Next steps for teams:

- Benchmark your current setup, then raise worker_pool_size with CPU headroom and re‑measure.

- Migrate to the Redis adapter if you still ride on LISTEN/NOTIFY.

- Audit Turbo Streams broadcasts and shift heavy ones to …_later variants.

- Verify permessage‑deflate negotiation and LB sticky/timeout settings.

- Instrument Action Cable events and add tracing to correlate app, Redis, and network latency.

With these practices in place, real‑time Rails scales to thousands of concurrent connections and high message rates while maintaining crisp, consistent latency—no heroics required. 🚀